Stop Settling for Generic — The Prompting Framework That Changes Everything

START HERE — THE ANSWER YOU WERE SEARCHING FOR

If you searched ‘why are my AI outputs so generic’ or ‘how to get better results from AI at work’ — you are in exactly the right place. The short answer is this: AI is not broken. Your prompts are. AI language models are extraordinarily capable tools, but they are only as good as the instructions you give them. Feed them a vague, Google-style question and they will return a statistically average answer — technically correct, entirely forgettable. Feed them precise, context-rich, framework-grounded instructions and the output transforms completely. This article will show you exactly how to make that shift. And if you want a shortcut to start testing these ideas today, a free 25-prompt sampler PDF is waiting for you at the end.

Why Your AI Outputs Sound Like Everyone Else’s

The moment I nearly gave up on AI at work

I will be honest with you. Early on, I nearly lost faith in AI entirely. Not because the technology was bad — but because I could not get it to give me what I actually needed. I was asking questions, refining them, asking more questions, going around in circles. Something that should have taken minutes was taking thirty, forty, sometimes sixty minutes of back-and-forth. And what I ended up with still felt generic. Still felt like it could have been written for anyone, about anything.

The problem, I eventually realized, was not the model. It was me. Or more specifically, it was the way I was approaching it — like a Google search. Type a question, get an answer. But that is not how AI works. And once I understood the difference, everything changed. Research from MIT Sloan confirms that generative AI results depend on user prompts just as much as the underlying model itself. The tool was never the bottleneck. The instructions were.

How generic inputs guarantee generic outputs every single time

AI language models are, at their core, probabilistic pattern-matchers. Feed them an ambiguous prompt and they default to the most statistically probable response — which is, by definition, the most average one. Stanford’s prompting research confirms that the more specific the prompt, the more focused and accurate the response. Think of it this way: if you walked into a restaurant and told the chef to ‘make you something,’ you might end up with a cheese sandwich — not because the chef lacks skill, but because you gave them nothing meaningful to work with. Vagueness invites mediocrity.

The hidden professional cost of settling for generic

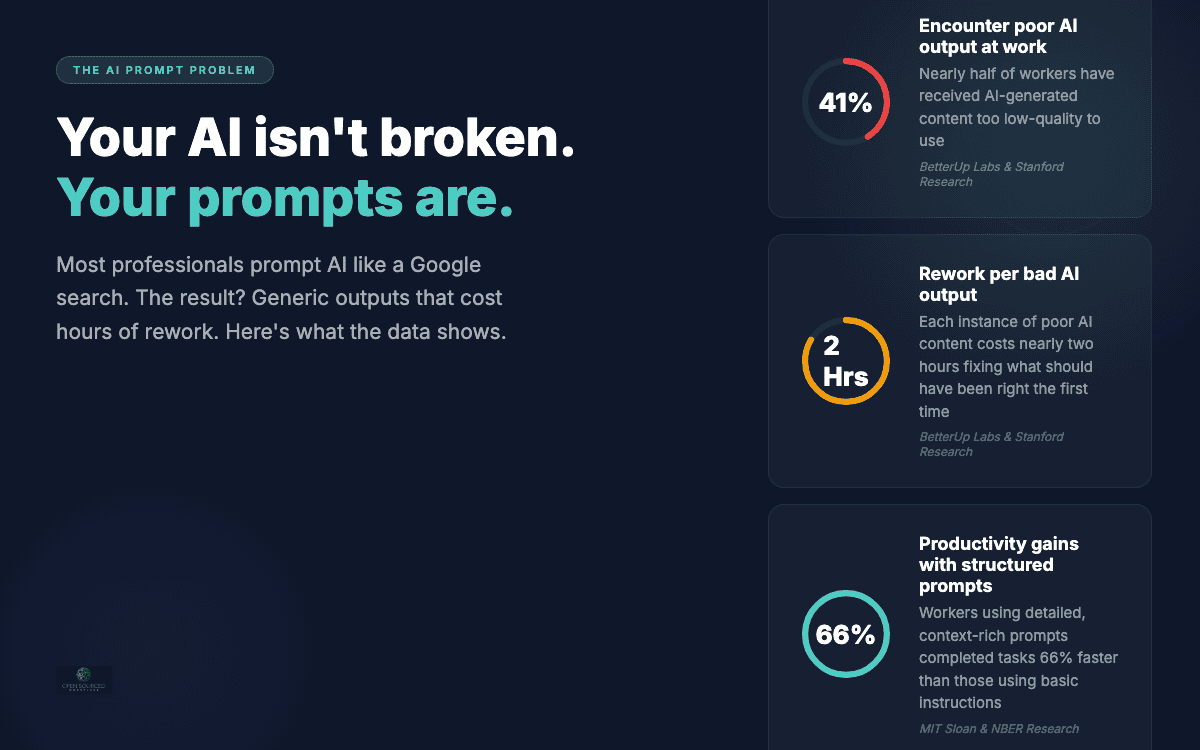

Research from BetterUp Labs and Stanford found that 41% of workers have encountered poor-quality AI-generated output at work, costing nearly two hours of rework per instance. There is a real professional cost to consistently producing AI output that reads like it could have been written by anyone, for no one in particular. Colleagues notice. Managers notice. Clients definitely notice. Prompting well is not just a productivity skill. It is a reputational one.

The Root Cause — We Are All Prompting Like It’s a Google Search

Why the Google habit is working against you

The single biggest shift I had to make was forgetting how I use Google. When we search online, we type fragments (a few keywords, a short phrase) and the search engine does the interpretive work. It fills in the gaps. It guesses what we mean. AI language models do not work that way. They respond to what you actually wrote, not to what you meant. And if what you wrote was vague, they fill the gaps with the most generic, statistically safe response available.

Most people have not made this mental shift yet. Prompt engineering statistics from 2025 show that while 94% of employees report familiarity with generative AI tools, the vast majority still interact with them like search engines. The familiarity is there. The methodology is not.

The engineer’s mindset that changes the quality of everything

Some of the best writers of AI prompts are engineers and computer scientists, because they are used to telling computers exactly what to do. They think in terms of specifications, constraints, inputs, and expected outputs. They do not ask. They define. The rest of us are only beginning to learn that language. And the good news is you do not need a technical background to adopt this mindset. You just need to stop asking questions and start writing briefs. As MIT Sloan’s research notes, you can increase clarity and relevance by specifying the task, providing examples, and outlining rules or constraints. That is engineering thinking applied to language, and it is a skill anyone can develop.

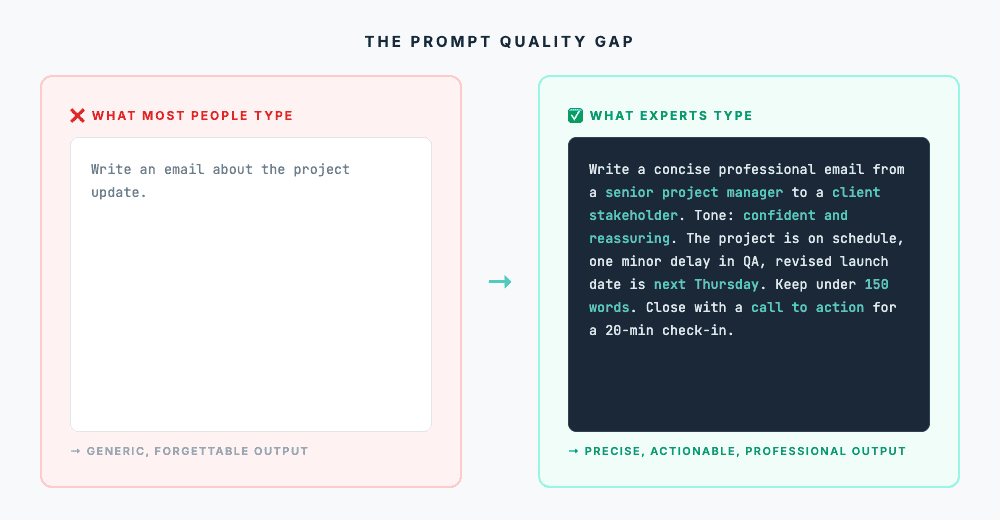

What most people type vs what they should be typing

The average workplace prompt looks something like this: ‘Write an email about the project update.’ It names a format and a topic. That is all. A well-constructed prompt looks more like this: ‘Write a concise professional email from a senior project manager to a client stakeholder. The tone should be confident and reassuring. The project is on schedule, one minor delay occurred in QA, and the revised launch date is next Thursday. Keep it under 150 words and close with a call to action for a 20-minute check-in.’ One prompt outsources all the thinking to the model. The other does the cognitive work upfront and directs the model toward a precise outcome. The difference in output quality is not incremental. It is categorical.

The Context Problem — And How Frameworks Solve It

Why context is the ingredient most people skip entirely

The challenge in the workplace — when you are trying to create something specific for a specific use case — is that you need to provide context. Not just any context. The right context. You need to know what it is, how to structure it, and how to communicate it to a model that has no knowledge of your organization, your team, your history, or your objectives. Without that context, the model fills the gap with generalities. With it, the output becomes something you can actually use.

Context is what transforms a technically correct response into a situationally intelligent one. Tell the model who will read this. Tell it what decision this document needs to support. Tell it what has already been tried or communicated. Tell it what success looks like. The more contextual intelligence you supply, the more contextually intelligent the output becomes.

The consultant framework secret — where the real ROI lives

Here is one of the most powerful and underused insights in workplace AI: we pay consultants enormous amounts of money because they have built frameworks. McKinsey, Deloitte, KPMG, Gartner — whichever consultancy your organization uses. They have developed models and methodologies over decades. Those frameworks are documented. They are proven. They are respected by leadership. And they already exist. McKinsey’s own research on AI’s economic potential estimates the technology could deliver productivity gains worth trillions across industries. But the insight that matters most at an individual level is simpler: you do not need to reinvent the framework. You need the right prompt to apply it.

The method is straightforward. Find the respected industry framework your organization already uses or recognizes. Feed that framework into your prompt alongside your specific workplace context. Ask the AI to apply it to your precise situation. Suddenly the AI has a structural guardrail. It is no longer guessing at what ‘good’ looks like. It is applying a proven methodology to your specific problem. The output shifts from generic advice to genuinely grounded recommendations — the kind that used to require a consultant invoice.
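The method above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: the function name `build_framework_prompt`, the choice of SWOT as the framework, and the sample context are all assumptions made for the example. Substitute whichever framework your organization already recognizes.

```python
def build_framework_prompt(
    framework: str, components: list[str], context: str, task: str
) -> str:
    """Combine a named framework, workplace context, and a task into one prompt."""
    component_lines = "\n".join(f"- {c}" for c in components)
    return (
        f"Apply the {framework} framework to the situation below.\n\n"
        f"Framework components:\n{component_lines}\n\n"
        f"Context:\n{context}\n\n"
        f"Task:\n{task}\n\n"
        "Structure the answer with one section per framework component."
    )


# Hypothetical example values; replace them with your own framework and context.
prompt = build_framework_prompt(
    framework="SWOT",
    components=["Strengths", "Weaknesses", "Opportunities", "Threats"],
    context="A 40-person facilities team is deciding whether to consolidate two offices.",
    task="Recommend whether to consolidate, with the main risks for leadership.",
)
print(prompt)
```

The framework is passed in as data rather than hard-coded, so swapping in a different model changes one argument and leaves the prompt structure intact.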

There are real opportunities for organizations to save hundreds of thousands of dollars on consultancy fees simply by learning how to use these tools properly. That is not an exaggeration. Research from the St. Louis Fed found that workers are on average 33% more productive in each hour they use generative AI. Pair that productivity gain with framework-grounded prompting and the compounding effect on output quality — and cost savings — becomes significant.

How to think about the end result before you write a single word

One of the most important shifts I made was learning to think about the end result first, then work backwards. Before I write a prompt now, I ask myself: what does success look like? What format does the output need to be in? Who is going to read it, and what do I need them to feel or decide? Once you have those answers, writing the prompt becomes far more straightforward. You are not searching for an answer anymore. You are defining the destination and asking the AI to help you get there.

The Role, Context, Format Framework

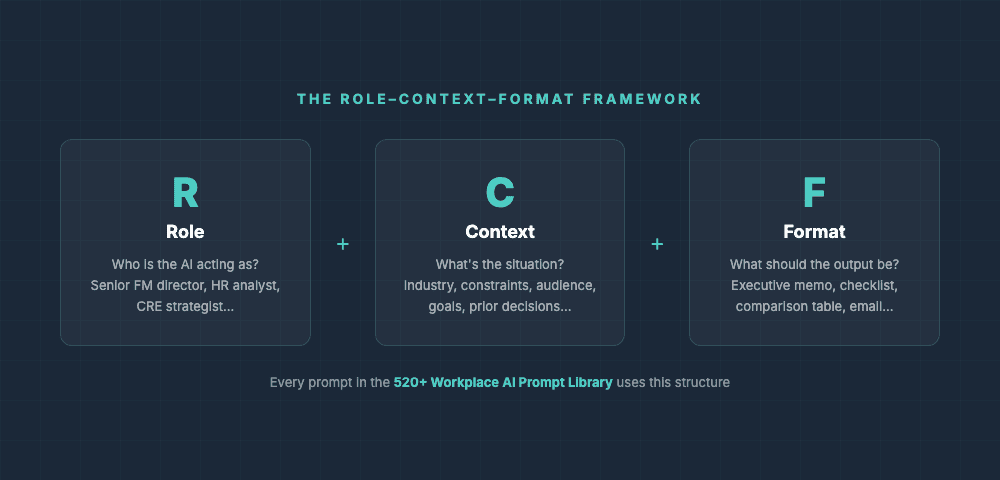

Giving your AI a persona that matches your professional needs

Role assignment is one of the most powerful levers in your prompting toolkit. Harvard University’s AI prompting guidance identifies this as one of the most effective techniques available — asking AI to behave as a type of person or professional helps it emulate that role and tailor its answers accordingly. When you tell the model to behave as a seasoned financial analyst, a plain-language legal drafter, or a no-nonsense executive communicator, you are not just changing the vocabulary. You are reshaping the entire posture of the output.

Format instructions — the detail most people forget

Format instructions are non-negotiable if you want immediately usable output. Specify whether you want bullet points or prose. State the maximum word count. Indicate whether headers are required. Tell the model the register — formal or conversational. Without format instructions, the model defaults to its own structural preferences — which may bear no resemblance to what you actually need. Take thirty seconds to define the format upfront and save yourself ten minutes of post-processing.

Prompting for your industry and your audience

The vocabulary you use in your prompt directly influences the vocabulary that appears in your output. Calibrate your prompting style to the norms of your industry and describe your audience explicitly: their expertise level, their likely objections, their decision-making context. A prompt that says, ‘write this for a procurement manager who is skeptical about vendor changes and has limited time’ will produce something categorically better than one that says, ‘write this for a business audience.’ Research on few-shot prompting reports that providing specific examples can increase output accuracy by 15–40%, and a precise audience description gives the model a similarly concrete target.
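One way to make role, context, audience, and format a habit is to treat them as required fields. The sketch below is illustrative only: the `PromptBrief` class and its field names are assumptions made for this example. The sample values reuse the project-update email described earlier in this article.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBrief:
    role: str       # the persona the model should adopt
    context: str    # the facts the model cannot know on its own
    audience: str   # who will read the output
    task: str       # what to produce
    format_rules: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Assemble the brief into a single prompt string."""
        sections = [
            f"Role: Act as {self.role}.",
            f"Context: {self.context}",
            f"Audience: {self.audience}",
            f"Task: {self.task}",
        ]
        if self.format_rules:
            rules = "\n".join(f"- {rule}" for rule in self.format_rules)
            sections.append(f"Format:\n{rules}")
        return "\n\n".join(sections)


brief = PromptBrief(
    role="a senior project manager",
    context="The project is on schedule. One minor delay occurred in QA. "
            "The revised launch date is next Thursday.",
    audience="A client stakeholder who needs reassurance.",
    task="Write a concise professional email with a confident tone.",
    format_rules=[
        "Keep it under 150 words.",
        "Close with a call to action for a 20-minute check-in.",
    ],
)
print(brief.render())
```

Because every field except the format rules is mandatory, the brief cannot be created without naming a role, a context, an audience, and a task.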

Advanced Techniques That Compound Over Time

Chain prompting — why one message is rarely enough

The assumption that a single prompt should produce a finished output is one of the most limiting beliefs in AI workflows. Google Research’s work on chain-of-thought prompting, a related technique in which the model works through intermediate reasoning steps inside a single response, demonstrates that decomposing multi-step problems into intermediate steps significantly improves model performance. Chain prompting applies the same decomposition across several messages. Use one prompt to generate a rough structure, a second to develop the content, a third to refine the tone, and a fourth to tighten the language. Each step builds on the last. The quality difference between a single prompt and a well-sequenced chain of prompts is dramatic.
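The four-step sequence can be sketched as a loop in which each response becomes the input to the next prompt. This is a hedged illustration: `run_chain` is a hypothetical helper, and `echo_model` is a stand-in that only labels each step so the flow is visible. In practice you would replace it with a call to whichever AI tool you use.

```python
from typing import Callable


def run_chain(model: Callable[[str], str], topic: str, steps: list[str]) -> list[str]:
    """Run prompts in sequence, feeding each response into the next prompt."""
    outputs = []
    previous = topic
    for instruction in steps:
        prompt = f"{instruction}\n\nInput:\n{previous}"
        previous = model(prompt)
        outputs.append(previous)
    return outputs


def echo_model(prompt: str) -> str:
    """Stand-in for a real model call; returns a label instead of real text."""
    first_line = prompt.splitlines()[0]
    return f"[response to: {first_line}]"


steps = [
    "Generate a rough outline for this topic.",
    "Develop the outline into full content.",
    "Refine the tone to be confident and reassuring.",
    "Tighten the language and remove filler.",
]
results = run_chain(echo_model, "Quarterly project update for a client", steps)
for result in results:
    print(result)
```

Keeping every intermediate output in the returned list makes it easy to see which step introduced a problem and to rerun only that step.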

Using real examples to get outputs that sound like you

Nothing communicates your expectations to a language model more effectively than showing it precisely what you want. Few-shot prompting research published on arXiv reports that providing examples improves accuracy substantially, especially for complex tasks. Paste three or four paragraphs of your own writing into a prompt and instruct the model to match the tone, sentence length, vocabulary range, and stylistic character of what you have written. The resulting output will sound far more like you than anything generated from a generic prompt. Your authentic voice is a resource. Feed it to the model.
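As a minimal sketch of that instruction, the function below wraps your writing samples and a task into one prompt. The name `build_style_prompt` and the placeholder samples are assumptions for illustration. Paste your own paragraphs in their place.

```python
def build_style_prompt(samples: list[str], task: str) -> str:
    """Build a prompt that asks the model to match the style of the samples."""
    if not samples:
        raise ValueError("Provide at least one writing sample.")
    sample_blocks = "\n\n".join(
        f"Sample {i}:\n{text.strip()}" for i, text in enumerate(samples, start=1)
    )
    return (
        "Below are samples of my own writing.\n\n"
        f"{sample_blocks}\n\n"
        "Match the tone, sentence length, vocabulary range, and stylistic "
        "character of these samples.\n\n"
        f"Task: {task}"
    )


# Placeholder samples; replace with three or four paragraphs you actually wrote.
my_samples = [
    "Quick update: the pilot finished on time and the numbers look solid.",
    "Short version? We can ship next week if QA signs off by Friday.",
]
style_prompt = build_style_prompt(my_samples, "Draft a two-paragraph team update.")
print(style_prompt)
```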

How to use constraints to force better, more original outputs

Counterintuitively, constraints often improve output rather than diminish it. Telling a model it cannot use passive voice, must begin each sentence with an active verb, or must avoid a specific set of overused phrases forces it into less-traveled syntactic territory. The results are frequently more original, more precise, and more characterful than unconstrained output. Limitation is a remarkably effective creative catalyst.
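Constraints are easiest to enforce when they are written down as a list and checked afterwards. The sketch below is one illustrative way to do that: `add_constraints` appends numbered rules to a prompt, and `find_banned_phrases` scans a draft for overused phrases. Both function names and the banned-phrase list are assumptions for the example, and the check is a simple case-insensitive text match. It does not detect passive voice.

```python
def add_constraints(prompt: str, constraints: list[str]) -> str:
    """Append a numbered list of constraints to a prompt."""
    rules = "\n".join(f"{i}. {rule}" for i, rule in enumerate(constraints, start=1))
    return f"{prompt}\n\nConstraints:\n{rules}"


def find_banned_phrases(text: str, banned: list[str]) -> list[str]:
    """Return the banned phrases that appear in the text (case-insensitive)."""
    lowered = text.lower()
    return [phrase for phrase in banned if phrase.lower() in lowered]


# Illustrative list of overused phrases; build your own from drafts you dislike.
banned = ["game-changer", "in today's fast-paced world", "leverage"]

constrained = add_constraints(
    "Write a product announcement for our internal newsletter.",
    ["Do not use passive voice.", f"Avoid these phrases: {', '.join(banned)}."],
)
print(constrained)

draft = "In today's fast-paced world, this tool is a game-changer."
print(find_banned_phrases(draft, banned))
```

If the check finds anything, feed the offending phrases back to the model in a follow-up prompt and ask for a revision.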

The iteration habit that separates great outputs from good ones

Treat the first response as a draft, not a deliverable. After your initial output, follow up with refinement prompts: ‘Make the opening more arresting.’ ‘Reduce the word count by 30% without losing the core argument.’ ‘Elevate the vocabulary in the third paragraph.’ Each iteration compounds on the last. By the third or fourth pass, you often have something that bears little resemblance to the uninspiring first draft — and that is precisely the point.

Building a Prompt Library That Works for Your Specific Situation

Why I built over 500 prompts — and refined them down to 360+

When I started building the 360+ Workplace Prompt Library, the starting point was actually much larger: over 500 prompts. But some were repetitive, some were not sufficiently distinct, and some could be combined without losing their value. The thinking behind every prompt that made the final cut was the same: what are people actually trying to do in their everyday job across these functions? I know because I do it every day. That is my job. Listening to peers within my organization, outside it, and across the industry is where I identified the real problems and matched each one to a prompt and a framework designed to fix it.

Every prompt in the library is grounded in a real-world professional challenge. Not a hypothetical. Not a generic scenario. A real problem that real people face in real workplace functions — from HR to corporate real estate, from sustainability to operations. The frameworks included can always be changed. If an organization has a preferred model, they can substitute it. The prompt structure remains. The framework is the variable.

The personal prompt library every professional should be building

Beyond any off-the-shelf library, every professional should be building their own. The best prompts you write today should not be rewritten from scratch tomorrow. Save them. Categorize them by function, audience, and output type. Refine them slightly with each use. Over time, this becomes a compounding professional asset — a set of instruments calibrated to your specific context, your organization, and your way of working. NN/Group research found that AI tools increased business users’ throughput by an average of 66% on realistic tasks. A well-maintained personal prompt library amplifies that gain further still.
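A personal library does not need special software. As a rough sketch, the structure below stores each prompt with the three categories suggested above (function, audience, output type) and lets you filter on any of them. The class names, field names, and sample entries are hypothetical and are not taken from the 360+ Workplace Prompt Library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SavedPrompt:
    name: str
    function: str      # e.g. "HR", "Operations"
    audience: str      # who the output is for
    output_type: str   # e.g. "email", "report"
    template: str      # prompt text with {placeholders}


class PromptLibrary:
    def __init__(self) -> None:
        self._prompts: list[SavedPrompt] = []

    def add(self, prompt: SavedPrompt) -> None:
        self._prompts.append(prompt)

    def find(self, **filters: str) -> list[SavedPrompt]:
        """Return prompts whose fields match every filter, e.g. function="HR"."""
        return [
            p for p in self._prompts
            if all(getattr(p, key) == value for key, value in filters.items())
        ]


library = PromptLibrary()
library.add(SavedPrompt(
    name="policy-change-email",
    function="HR",
    audience="all staff",
    output_type="email",
    template="Write a {word_limit}-word email to all staff explaining this policy change: {change}",
))
library.add(SavedPrompt(
    name="space-utilization-report",
    function="Corporate real estate",
    audience="leadership",
    output_type="report",
    template="Summarize office utilization for leadership using this data: {data}",
))

hr_emails = library.find(function="HR", output_type="email")
prompt = hr_emails[0].template.format(word_limit=150, change="a new hybrid-work schedule")
print(prompt)
```

Storing prompts as templates with named placeholders keeps the structure fixed while the specifics change with each use.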

Turning your best prompts into shared team standards

A personal prompt library is valuable. A shared team prompt library is exponentially more so. When your best-performing prompts are accessible to everyone on your team, output quality improves collectively and consistently. Prompts become institutional knowledge — refined by multiple contributors, battle-tested across varied use cases, and continuously improved. This is how organizations begin to build genuine competitive advantage from AI tools: not by using them more, but by using them better. Research from Stanford GSB found that productivity improvements from AI tools spread fastest when best practices — not just tools — were shared across teams.

Common Mistakes That Are Quietly Killing Your Output Quality

Asking too many things at once

When you ask a model to simultaneously write a summary, suggest alternatives, create a timeline, and adjust the tone for a different audience, you fragment its attention. The result is almost always a superficial treatment of every element. Decompose complex requests into sequential, focused prompts. One clear objective per prompt. That discipline alone will transform what you get back.

Skipping the format specification

Madison College’s prompting research confirms that offering precise details — type, tone, target audience, and word count — generates the desired output, while omitting them produces generic results. Without a length specification, the model will default to whatever length feels statistically appropriate — which may be three times longer than you need. Without a tone specification, you get a tonal average that fits no specific context well. These are trivial to specify and transformative to include.

Accepting the first response when the third one is always better

The first response is the model’s best guess at what you want. The third response — after two rounds of refinement prompting — is the model’s calibrated approximation of what you actually need. Professionals who accept first drafts from human writers are considered either easily satisfied or poorly organized. The same standard should apply to AI output.

What Happens When You Actually Learn How to Do This

The moment your eyes open

There is a puzzle most people will recognize. How many squares are in a 3×3 grid? Most people say nine. Then someone asks a different question, one that shifts the angle, and suddenly you can see squares within squares: four overlapping 2×2 squares and the full 3×3 grid itself, on top of the nine small ones. The answer was never nine. It was fourteen. You just needed someone to show you how to look differently.

That is what a well-constructed prompt library does. It is not just a collection of pre-written questions. It is a set of lenses that teaches you to see the problem differently. Each prompt shows you the logic behind it — why context matters here, why this framework fits this challenge, why structuring the output this way produces something more useful. Once you have seen that logic a few times, you start applying it instinctively. And that is when the real transformation happens.

Why the library is a starting point, not a destination

I fully expect someone who uses the 360+ Workplace Prompt Library to eventually realize there is a better way of doing it than what I have provided — because they will know their exact situation, their end goal, and which frameworks fit their organization better than any generic starting point can. The prompts are the education. The methodology is what you take away. And once that methodology is internalized, you will write prompts that are more specific, more grounded, and more effective than anything pre-built — because they will be built around your reality.

That shift — from dependency on a tool to ownership of a skill — is where the real professional value lies. Research from the NBER found that AI productivity gains were highest among those who internalized the underlying approach and adapted it to their specific context — not those who simply used the tools passively. The difference is prompting with intent versus prompting out of habit.

Your next step — start with the free sampler

The fastest way to experience this shift firsthand is to test it. A free 25-prompt sampler PDF is available to download — no obligation, no complexity. Work through even five of those prompts and you will begin to feel the difference between searching for an answer and directing an AI toward one. Each prompt includes the framework logic built in, so you can see exactly why it is structured the way it is. If you find value there, the full 360+ Workplace Prompt Library covers every major workplace function with real-world context and proven frameworks throughout. The technology was never the bottleneck. The instructions you give it were. Fix the instructions — and everything else follows.

The gap between generic and great AI output is not a technological gap. It is a prompting gap. And now you know exactly how to close it.

FREQUENTLY ASKED QUESTIONS

I’ve tried adding more detail to my prompts but I’m still getting mediocre results. What am I missing?

More detail alone is not enough — the right kind of detail is what matters. The most common gap is the absence of a framework. Rather than describing your task in more words, try anchoring your prompt to a recognized professional model your organization already uses — a Gartner matrix, a McKinsey framework, a specific ISO standard. When the AI has a structural reference point, the output shifts from generic advice to contextually grounded recommendations. If you are unsure where to start, the free 25-prompt sampler has the framework logic already built into each prompt — so you can see the difference immediately.

How is a purpose-built prompt library different from just Googling better prompts online?

Googled prompts are generic by design — written to work for the widest possible audience, which means they are optimized for no one in particular. A purpose-built workplace prompt library is different in two important ways. First, each prompt is constructed around a specific workplace function and a real professional challenge — not a hypothetical scenario. Second, the methodology behind each prompt teaches you how to think about the problem, not just what to type. Over time, you stop needing the library as a crutch and start writing your own high-quality prompts instinctively. That is the real outcome: not dependency on a tool, but the development of a durable skill.

Our team uses different AI tools — ChatGPT, Copilot, Claude. Will these prompting techniques work across all of them?

Yes. The principles in this article (role assignment, context-setting, framework-grounding, format specification, and iterative refinement) are model-agnostic. They work because they address the fundamental dynamic of how AI language models process instructions, regardless of the platform. Lenny’s Newsletter on prompt engineering in 2025 confirms that the core techniques consistently improve output quality across all major AI platforms. The specific syntax may vary slightly between tools, but the underlying logic is consistent. A well-structured, framework-grounded prompt will outperform a vague one on any AI platform available today.
