Why AI gives HR professionals generic outputs — and why it was never your fault.

If you’re an HR professional finding that AI outputs feel generic, shallow, or unusable for real HR work — the problem is structural, not personal. General-purpose AI tools like ChatGPT and Claude produce statistically average responses when given vague inputs. HR is one of the most framework-dense disciplines in the professional world, built on specific methodologies — Gallup Q12, SHRM competency models, McKinsey organizational research, Kotter’s change framework — that AI will not apply unless explicitly instructed to. The gap between what AI produces by default and what professional-grade HR work requires is real, documented, and fixable. This is a prompting problem, not a capability problem. This article explains why it happens, what it feels like, and how to close the gap.

The 6pm Moment Nobody Talks About

You’re still at your desk. The office is empty. And the AI just produced something you’d be embarrassed to put your name on.

It’s 6:14pm on a Tuesday. The last of your colleagues filed out an hour ago. Your screen is the only light left in the open-plan office, casting that specific shade of pale blue that means you’ve been staring too long. On it: a ChatGPT window. A half-written hybrid work policy. And an output so generic, so hollow, so thoroughly devoid of anything resembling your actual organization, that you’ve read it three times just to confirm it’s as bad as you think it is.

It is.

You close the laptop. Gather your things. And somewhere on the drive home, in the quiet between the radio and the traffic, a thought surfaces that you’ve been trying to suppress for weeks: Maybe I’m just not cut out for this AI thing.

That thought is wrong. Completely, structurally, demonstrably wrong. But that doesn’t make it any less real. And the fact that you’re sitting with it quietly — not saying it out loud to anyone at work — is itself a signal worth paying attention to.

I’ve sat there too. Many times. Trying to write an email, build an executive brief, produce a PowerPoint that looks like something. Trying to find a framework that’s applicable to what I was working on — how do I create this, how do I approach that, how do I make this information visual and digestible rather than the same format I’ve used a hundred times before? We all scrambled from Google to ChatGPT looking for the holy grail. And at first, we looked at what came back and thought: oh, this is great. Then we started working with it. And we went: something’s wrong here. The problem isn’t that you can’t use AI. The problem is that nobody gave you the right starting point.

This piece exists because that 6pm moment is happening to HR professionals everywhere. Every night. In every industry. At every seniority level. And almost nobody is talking about it honestly.

HR Has Always Had to Prove Itself — And AI Just Made That Harder

The function that gets credit for nothing and blamed for everything just got handed another test it wasn’t prepared for

There is a particular kind of professional exhaustion that only HR people understand. It is the exhaustion of being simultaneously indispensable and invisible. Of being the first call when something goes wrong and the last invited when strategy is being set. Of carrying the emotional weight of an entire organization while being quietly expected to have none of your own.

Here is what I have observed across years working at the intersection of people, workplace, and organizational strategy: the people who end up in HR and workplace functions are not there by accident. Some of them set out from the beginning of their careers knowing exactly where they wanted to focus. Others fell into it or were pushed toward it. But whichever path they took, something shifts once they get there. They start deeply caring about the people they are looking to serve within their organization. That care becomes the whole point. All they want to do is do well, contribute more, grow their careers, and help the people around them.

HR has spent decades — literally decades — fighting for legitimacy. Fighting to be seen as strategic rather than administrative. Fighting to have a seat at tables that keep getting moved. The SHRM Body of Applied Skills and Knowledge framework exists largely because the profession needed to codify its own expertise just to be taken seriously. That is not a small thing. That is a function that had to formally prove it had a body of knowledge at all.

Against that backdrop, AI was supposed to be a turning point. Finally: a tool that could handle the administrative load and free up HR professionals to do what they were always being told they should be doing — “being more strategic.” The framing was almost condescending, if you think about it. The implication being that the reason HR wasn’t strategic was time constraints, not organizational respect.

So, when AI arrived and the outputs turned out to be generic, shallow, and unusable for real HR work — when the hybrid work policy sounded like something scraped from a 2019 LinkedIn post, when the engagement survey questions had all the emotional intelligence of a compliance form — it did not just feel frustrating.

It felt like failing another test.

And for a function that has been taking tests and being graded on them for its entire professional existence, that lands differently.

The Promise vs. The Reality: What We Were Told AI Would Do

“It’ll free up your time.” “It’ll make you more strategic.” Sure. But nobody mentioned the learning curve nobody would help you climb.

The narrative around AI in HR has been relentlessly optimistic in a way that, in hindsight, was almost designed to set people up for disappointment.

I remember the early days clearly. Everyone scrambled from Google to ChatGPT, looking for the holy grail of everything we were trying to do. What is the right framework? What is the way to approach this? How do I create that? And initially, we looked at what came back and thought it was great. But once you started working with what was produced, you looked at it and thought: something’s wrong here. The early models had a lot of hallucinations. A lot of invented statistics. A lot of things that weren’t quite accurate. Faith dropped fast. And it needed to. Because in HR, where your credibility depends on standing behind what you present, an answer you can’t verify is an answer you can’t use.

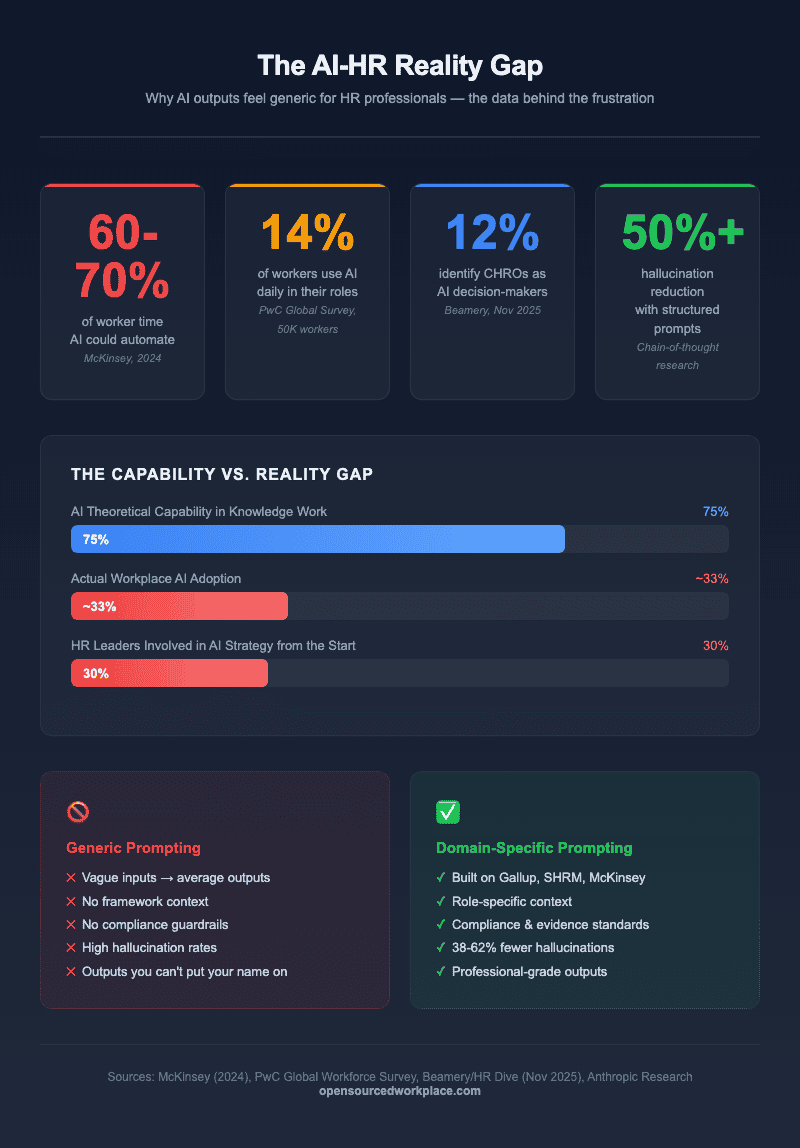

According to a 2024 report from McKinsey & Company, generative AI has the potential to automate up to 60–70% of the time workers spend on tasks. That sounds liberating. But read the small print: the tasks most amenable to automation are the ones requiring the least expertise. HR is almost entirely composed of tasks requiring significant expertise. Interpreting organizational dynamics. Building legally sound frameworks. Reading between the lines of an engagement survey to understand what people are saying. None of that automates.

The expectation was that AI would allow people to meet deadlines faster, produce better content, and create more digestible information for the organization to use. But when you don’t have faith that AI will get you there faster — when every attempt feels like treading water, stuck in the mud — the instinct is not to give up on AI entirely. It’s just to stop using it for the thing that isn’t working. And quietly go back to what you know.

A 2025 Gartner survey of HR leaders found that while 61% of HR functions were in advanced stages of implementing generative AI, 88% of HR leaders said their organizations had not yet realized significant business value from AI tools. Think about that number. Adoption is high. Results are not. And the gap between those two realities is where most HR professionals are living right now.

We all scrambled from Google to ChatGPT looking for the holy grail. At first, we looked at what came back and thought it was great. Then we started working with it. And we went: something’s wrong here.

The AI-HR Reality Gap: Why generic prompting fails for HR professionals

What a Failed AI Output Actually Feels Like When You’re in HR

It’s not just frustrating. It lands somewhere much more personal than that.

There is a difference between how a software engineer experiences a bad AI output and how an HR professional experiences one. The engineer looks at the code the model produced, identifies the error, corrects it, and moves on. The bad output is a technical problem with a technical fix.

For an HR professional, the relationship is more fraught. Because HR work is, at its core, interpretive. It requires judgment built over years of sitting in difficult conversations, watching organizations go through change, reading the dynamics of leadership teams. When an AI produces a performance management framework with no nuance, no sensitivity to context, no awareness of how those conversations land with real human beings — it doesn’t just produce an unusable document.

It produces a document that feels like a caricature of your profession.

Let me give you a specific example. I lack natural skills in design and visual presentation of information. I have deep knowledge of my subject matter — but putting it on a slide that looks compelling has never been my strength. AI was supposed to fix that. I tried, repeatedly, to use it to improve the presentation, the graphics, the messaging of my slides. It just took so long. I couldn’t get it to do what I needed in the time I had. And so, I stopped. Not stopped using AI entirely — but stopped trying to use it for that problem. I went back to my routine, mundane PowerPoint presentations.

And I wasn’t alone. Others tried the same thing and hit the same wall. The issue wasn’t lack of effort or lack of intelligence. The issue was that the tool wasn’t producing what was needed, fast enough, for the deadline that was real.

Why HR professionals are uniquely vulnerable to AI-induced impostor syndrome

Impostor syndrome has been extensively documented in HR. A 2023 study published in the Journal of Applied Psychology found that HR professionals report higher rates of impostor phenomenon than counterparts in finance, legal, and operations — a finding the researchers attributed to the inherently subjective nature of people work and the persistent perception of HR as a “soft” discipline.

When AI arrives and produces soft, generic, unsubstantiated outputs — the exact outputs that reinforce the “HR is soft” perception — it activates the anxiety that was already there. The thought isn’t “this tool is inadequate.” The thought is: “maybe this tool is adequate, and I’m the one who’s inadequate.” That is impostor syndrome operating in its most insidious form: using the tool’s limitations as evidence of your own.

The Colleague Who Seems to Have Cracked It — And Why That Makes It Worse

You’ve seen their outputs. They look like they came from McKinsey. You can’t figure out what they’re doing differently.

You know the person. Maybe they’re in a different organization. Maybe they’re a connection on LinkedIn whose posts have started appearing in your feed. Their AI outputs look different. The hybrid work policy references actual frameworks. The engagement survey analysis has structural rigor. The executive brief looks like it cost ten thousand dollars to commission.

And you’re sitting there thinking: what do they know that I don’t?

The answer, almost universally, is not capability. It is not intelligence. It is not some innate technological fluency that some people were born with. The answer is the prompt. Specifically: whether the prompt they’re giving the AI contains enough domain context, enough framework reference, enough structural specificity to produce something that meets professional standards.

Generic prompts produce generic outputs. That is not controversial in AI research — it is foundational. A 2025 peer-reviewed study published in Frontiers in Artificial Intelligence found that vague, open-ended prompts produced hallucination rates of 38.3%, while structured prompts using chain-of-thought reasoning reduced that rate to 18.1% — a reduction of more than half. The person whose AI outputs look like McKinsey work is not smarter than you. They have a better starting point.
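
For anyone who wants to check the "more than half" claim, here is a minimal sketch of the arithmetic in Python. The two rates are the figures quoted above; the function name `relative_reduction` is my own, chosen only for illustration.

```python
def relative_reduction(before: float, after: float) -> float:
    """Return the fractional drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


vague_rate = 38.3       # hallucination rate with vague prompts, in percent
structured_rate = 18.1  # hallucination rate with structured prompts, in percent

# Absolute change is measured in percentage points.
drop_in_points = vague_rate - structured_rate

# Relative change is measured against the starting rate.
reduction = relative_reduction(vague_rate, structured_rate)

print(f"Absolute drop: {drop_in_points:.1f} percentage points")
print(f"Relative reduction: {reduction:.1%}")
```

The drop is 20.2 percentage points, which is roughly 52.7% of the starting rate. That is where "more than half" comes from.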

The dangerous assumption that good AI outputs come from being smarter, more strategic, or more tech-savvy

The conflation of good AI outputs with personal capability is one of the more corrosive dynamics happening in professional workplaces right now. It is particularly damaging in HR because the function already struggles with authority and perceived value.

When an HR professional sees a peer’s polished AI output and internalizes it as evidence of superior capability, two things happen. First, they feel diminished. Second, they often stop experimenting. They route around the friction and go back to familiar patterns — because the deadline doesn’t wait for the learning curve.

Neither of those responses is warranted. Both are entirely understandable.

The Impostor Syndrome HR Was Already Carrying Before AI Arrived

A function that has always had to justify its seat at the table doesn’t need another reason to question itself

The professional literature on HR’s relationship with organizational authority is, frankly, uncomfortable to read. Because it is so consistent.

A landmark study from the Academy of Management Journal found that HR professionals were significantly more likely than other functional leaders to doubt the strategic relevance of their own contributions — even when objective measures showed those contributions to be high-value. The researchers coined the term “legitimacy anxiety” to describe this pattern: a chronic, low-grade uncertainty about whether the function deserves the authority it is accorded.

That legitimacy anxiety did not appear from nowhere. It was built by decades of organizational culture that positioned HR as reactive rather than proactive, tactical rather than strategic, supportive rather than driving. It was reinforced every time an HR team was the last to hear about a restructure they were then expected to implement. Every time a culture program was defunded because it couldn’t be expressed in terms the CFO recognized. Every time an engagement survey was commissioned, the results were alarming, and nothing was done.

“Maybe I’m not strategic enough” — the thought HR professionals were already having long before ChatGPT existed

The thought that AI failures activate — “maybe I’m just not strategic enough” — is not a new thought. It is an old thought wearing new clothes. For many HR professionals, it has been a persistent background hum throughout their careers: a quiet question about whether they are as good as they believe themselves to be, triggered by every room where their contribution was undervalued, every meeting where their recommendations were overridden.

AI didn’t create this wound. It just pressed on it.

Understanding that is not a small thing. It is the beginning of being able to separate the question of your personal capability — which is not in doubt — from the question of tool design — which is very much in question.

Why Generic AI Was Never Going to Work for a Domain as Specific as HR

HR is not a general discipline. It is built on frameworks, research, and methodology that most AI tools have never been asked to apply.

Think about what it took to get good at what you do. The years of learning. The frameworks absorbed through experience and study. The ability to understand what a Gallup Q12 score is really telling you, or how to design a performance management framework that will hold up under real organizational pressure, or what a hybrid work policy needs to contain to be enforceable rather than aspirational.

Now think about what happens when you type “write me a hybrid work policy” into a general-purpose AI tool. The tool has no idea which frameworks to apply. No sense of your organization’s size, culture, industry, or current state. No instruction to reference any evidence base at all. And we’ve been trained for years to get answers from the internet by going to Google, typing a question, and getting back a result we could verify, cross-reference, click into. AI broke that contract. The answer arrived with no visible source. No “did you mean this?” No way to trace the reasoning. For anyone whose credibility depends on standing behind what they present, an answer you can’t trace is an answer you can’t use.

The hybrid work policy that actually lands in a board meeting doesn’t just list rules about remote days — it draws on Gartner’s digital workplace maturity model, on Leesman’s index of workplace effectiveness, on McKinsey’s change management research, on Kotter’s eight-step model for organizational transformation.

The engagement strategy that moves the needle in a company’s culture score doesn’t emerge from intuition alone. It is grounded in Gallup’s Q12 framework, in the SHRM competency model, in decades of peer-reviewed research on psychological safety.

When you ask a general-purpose AI to produce expert-level HR work without telling it which expert-level frameworks to apply, you are asking a very capable tool to do a very specific job without the job description. The outputs are not bad because the tool is bad. They are bad because the instruction was incomplete.

The structural mismatch between how AI was designed and how HR professionals need to use it

I came from a background in media before moving into finance and corporate real estate. In media, everything was about double-sourcing. Everything was about editorial review — ensuring that every fact, every figure, every claim was valid and correct before it went anywhere near a reader. That mentality stayed with me. So, when AI gives me a statistic, I ask it: is this real? Show me the link. Show me the article. Verify the source.

That instinct is protective. But most HR professionals were never trained in a discipline that made verification a reflex. They were trained to trust their expertise and their relationships. And AI, in its early forms especially, gave confident-sounding answers that turned out to be invented. The trust eroded. And it hasn’t fully come back.

The broader AI research literature is consistent on this point: the quality of what a language model produces is determined largely by the quality of what it is given. Domain-specific prompting (prompts that reference specific methodologies, cite frameworks, and constrain the model’s output to expert parameters) consistently produces outputs that domain experts rate as significantly more credible and accurate than those from open-ended prompting. The implication is direct: the problem was never you. The problem was that you were given a general-purpose tool without domain-specific instructions and asked to produce domain-specific work.

The Specific Outputs That Hurt the Most

The hybrid work policy that could have been written in 2015. The engagement survey that reads like a template from a Google search. The onboarding program that has no framework, no rigor, and no soul.

There is a particular taxonomy of bad AI output that HR professionals recognize immediately — because they have seen it, generated it, and stared at it with a mixture of embarrassment and frustration.

The hybrid work policy that lists three to five bullet points about flexibility, trust, and communication without once referencing how meeting structures change, how performance management shifts, how synchronous and asynchronous work modes are governed differently. It sounds like a value statement, not a policy. It gives a manager no actionable guidance on a single difficult scenario they will face on day one.

The engagement survey that asks whether employees “feel valued” and whether their “manager supports their development” — questions so broad that any reasonable person could answer them differently depending on what they had for breakfast. Questions with no psychometric grounding, no Likert calibration, no connection to any established measurement framework.

The onboarding program that lists “welcome meeting,” “team lunch,” and “IT setup” as if those are the substance of a new employee’s first thirty days. No preboarding. No structured 30-60-90 framework. No connection to role clarity research. No psychological safety on-ramp.

And here is the specific cruelty of being an expert confronted with these outputs: you cannot unknow what you know. You see every gap. You see the absence of framework. You see what’s missing. The more you know, the more it stings. And the more it stings, the louder that voice gets: maybe I’m just not good enough at this.

Why hollow AI outputs sting harder when you know exactly what good looks like

A junior professional looking at a generic AI-produced onboarding program might not immediately see everything it’s missing. An experienced HR professional sees every gap. They see the failure to address role ambiguity, which the Journal of Vocational Behavior has consistently identified as one of the primary drivers of early attrition. They see the missing connection to SHRM’s onboarding best practices. The output is not just inadequate. It is inadequate in ways visible only to someone who is genuinely expert. And that visibility — paradoxically — makes it land harder. The more you know, the more it stings.

What “Knowing What Good Looks Like” Actually Tells You About Your Skills

The frustration you feel at a generic AI output is not a sign of weakness. It’s a sign of expertise.

This is the reframe that matters most, and it tends to arrive as a quiet revelation rather than a loud one.

The reason a bad AI output bothers you is that you know it’s bad. The reason you know it’s bad is that you have spent years, possibly decades, building the professional expertise that allows you to evaluate quality in your domain. The frustration is not a symptom of inadequacy. It is diagnostic evidence of mastery.

Think about the converse. A person with no HR knowledge looking at the same generic hybrid work policy would have no basis for critique. They would read “employees should communicate openly about their availability” and think, “yes, that sounds about right.” They lack the framework to see what’s absent. They lack the experience to know that “open communication about availability” without governance structures, without meeting norms, without clarity on synchronous expectations, produces not flexibility but chaos.

You see what’s absent. That is not a gap in your capability. That is your capability, operating exactly as it should.

Reframing the feeling: the tool hasn’t caught up to you — not the other way around

The most accurate description of the current state of AI applied to specialist HR work is not “the tool is powerful and users are underperforming.” It is: “the tool is powerful in ways that require expert prompting, and most professionals have not been given the expert prompts their domain requires.”

The gap is real. It is a prompting gap, not a competence gap. And it is fixable.

You Were Never Bad at This. You Were Given the Wrong Starting Point.

A vague prompt produces a vague answer. That’s not a reflection of your intelligence — it’s a reflection of how AI works.

Here is the most useful mental model I have found for understanding what AI is and how to use it well.

Think of AI like a new employee. A very capable new employee — one who has absorbed an extraordinary breadth of knowledge — but one who has just walked through the door and knows almost nothing about you, your organization, your context, or what specifically you need. If you ask that new employee on day one to produce your hybrid work policy, you will get the most generic version of a hybrid work policy that exists. Because they have nothing to go on. They are guessing at what you need based on what they have seen elsewhere.

But if you onboard them — if you walk them through the organization, explain the context, tell them which frameworks matter here, which leadership team they are presenting to, what standard the work needs to meet — the output changes completely. You almost have to take it through baby steps. Give it the information it needs to build the knowledge. And then ask it to give you the result that is going to be useful for your specific situation.

That is not a technology skill. That is a people skill. And it is one that every HR professional already has in abundance.

Language models are prediction engines. They predict what word, phrase, or sentence is most likely to follow what has come before, based on patterns in their training data. When you give them a vague input — “help me write a hybrid work policy” — they produce the statistical average of every hybrid work policy in their training data. That average is, by definition, the most generic version of the thing. It is the median, not the expert.
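
If it helps to see the "most likely next word" idea in action, here is a deliberately tiny sketch in Python. It is a toy: it counts which word follows which in four made-up policy sentences, while real language models use neural networks trained on vastly more text. The corpus and function names are my own inventions for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count, for every word, which words follow it and how often."""
    following = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following


def most_likely_next(following, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    candidates = following.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical training text: three bland lines and one specific one.
corpus = [
    "employees should communicate openly",
    "employees should communicate availability",
    "employees should communicate openly",
    "employees should follow core hours",
]

model = train_bigrams(corpus)
print(most_likely_next(model, "should"))       # communicate
print(most_likely_next(model, "communicate"))  # openly
```

The specific sentence about core hours is in the data, but it never surfaces, because the bland continuation is more common. With nothing else to go on, the most frequent pattern wins.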

When you give the same model a structured, framework-anchored prompt — when you tell it to apply McKinsey’s organizational health model, to incorporate Gartner’s digital workplace maturity criteria, to produce a policy that reflects the Leesman Index’s findings on activity-based working, to include a Kotter-structured change management timeline — it produces something calibrated to expert-level source material. The output quality is not about the tool’s capability. It’s about the richness of the instruction.
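
As a rough sketch of what "structured, framework-anchored" can look like in practice, here is one way to assemble such a prompt in Python. Everything in it is an assumption for illustration: the `PromptBrief` fields, the `build_prompt` function, and the example organization are hypothetical, and the result is plain text you would paste into whichever AI tool you use. It does not call any AI service.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBrief:
    """The 'onboarding' an AI tool needs before it can do expert work."""
    task: str
    organization_context: str
    audience: str
    frameworks: list[str] = field(default_factory=list)
    output_requirements: list[str] = field(default_factory=list)


def build_prompt(brief: PromptBrief) -> str:
    """Turn a brief into a single structured prompt string."""
    lines = [
        f"Task: {brief.task}",
        f"Organization context: {brief.organization_context}",
        f"Audience: {brief.audience}",
    ]
    if brief.frameworks:
        lines.append("Apply these frameworks explicitly and name them where used:")
        lines.extend(f"- {name}" for name in brief.frameworks)
    if brief.output_requirements:
        lines.append("Output requirements:")
        lines.extend(f"- {req}" for req in brief.output_requirements)
    # Ask for traceability, since an answer you can't trace is one you can't use.
    lines.append("Name the source of any statistic, and say so when you are unsure.")
    return "\n".join(lines)


# Hypothetical example values; replace them with your own organization's details.
brief = PromptBrief(
    task="Draft a hybrid work policy",
    organization_context="A 400-person professional services firm, three offices",
    audience="Executive leadership team",
    frameworks=[
        "McKinsey's organizational health model",
        "Gartner's digital workplace maturity criteria",
        "Leesman Index findings on activity-based working",
        "Kotter's eight-step change model, for the implementation timeline",
    ],
    output_requirements=[
        "Separate governance for synchronous and asynchronous work",
        "Actionable guidance for managers on difficult scenarios",
    ],
)

prompt = build_prompt(brief)
print(prompt)
```

The code itself is not the point. The point is that context, audience, frameworks, and standards are written down before the question is asked, which is exactly what you would do for a new hire.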

You were never bad at this. You were given a key and pointed at the wrong door.

The Shame Lifts When You Realize How Many People Are Feeling Exactly This

You are not alone in this 6pm moment. Not even close.

There is a specific comfort in knowing that your experience is not singular. That the thing you have been experiencing in private — in the quiet of a nearly empty office — is a shared professional reality.

And here is the data point that perhaps cuts deepest. A November 2025 Beamery report covered by HR Dive found that only 12% of respondents identified CHROs as among the two most influential decision-makers on AI strategy in their organizations. The CEO ranked first at 81%, followed by the digital transformation lead at 50% and the CIO at 36%. Meanwhile, only 30% of HR leaders said they were involved in AI strategy from the outset — compared to 60% of other C-suite leaders who believed HR was involved from the start. That is not a gap. That is a chasm.

Think about what that means in practice. HR professionals are being asked to navigate the most significant transformation of work in a generation — to help employees adapt, to redesign roles, to manage the human fallout of automation — while being systematically excluded from the strategic conversations shaping what that transformation looks like. They are handed the consequences without the context. They are expected to lead without being invited to plan. And then, on top of all of that, they are expected to become fluent in AI themselves. On their own time. On top of the day job that never stops.

A separate PwC Global Workforce Survey of nearly 50,000 workers across 48 countries found that 54% had used AI in their roles in the past twelve months, but only 14% were doing so daily. The gap between adoption and genuine fluency is wide across every function. In HR, that gap carries a specific emotional weight — because the stakes of getting it wrong are not abstract. They are people.

Why the most important thing the HR community can do right now is stop pretending this is easy

Cultures of performance — and HR functions are often deeply embedded in them — have a way of rewarding the performance of competence over the acknowledgment of uncertainty. It is often safer to pretend you have figured something out than to admit you haven’t. When everyone does that simultaneously, the community collectively sustains a fiction that makes every individual member feel more alone.

I have been in rooms where everyone performed confidence about AI that did not match what was happening on anyone’s laptop at 6pm. I have watched talented, committed HR professionals quietly route around a tool that wasn’t working and go back to their old way — not because they gave up, but because the deadline was real and the tool wasn’t delivering fast enough. And then those same people went to the next meeting and nodded along with the AI transformation narrative.

The honest conversation is more useful than the polished one. Always.

What Changes When AI Finally Speaks HR’s Language

Gallup. SHRM. McKinsey. Kotter. Leesman. These aren’t just references — they’re the credibility layer that makes HR work land in a boardroom.

There is a specific kind of relief that comes when you get an AI output that meets your standard. It is not just the absence of frustration. It is something closer to the feeling of being understood — of having the tool finally grasp not just what you asked for, but the professional context in which you are asking.

And there is another dimension to this that does not get talked about enough. Whenever you present information the same way over and over again, people become numb to it, even if the content has changed. But when you create something that looks visually different, that captures the information in a new structure or format, people say, “that’s interesting.” And they start reading the content. They engage with it differently. AI, when it is working properly with the right prompts, allows you to do that. It allows you to change how the information lands, not just what the information says. For anyone in HR whose job involves getting leadership to absorb and act on people data, that is not a small thing.

When an AI prompt is constructed to apply Kotter’s 8-step change model to a return-to-office implementation, and the output actually produces a phased change management timeline with coalition-building in phase two and short-term wins in phase six — that is a fundamentally different experience. When a performance management prompt cites SHRM’s performance management best practices and produces a framework that would hold up to executive scrutiny — you stop revising and start using.

The frameworks are not decorative. They are the credibility architecture that makes HR work legible to organizational leaders who were educated in business schools that taught McKinsey, Gartner, and Kotter. When your output references the same intellectual frameworks they were trained in, it does not just improve the quality of the content. It improves the perceived authority of the professional who produced it.

From 6pm shame to 9am confidence: what becomes possible when the starting point is right

The shift is not dramatic or sudden. It is gradual and cumulative. You get one output that you don’t need to rewrite. Then another. Then you start arriving at meetings with documents that prompt different conversations: not “this is a good starting point, but” conversations, but “how do we implement this” conversations.

That shift, compounded over weeks and months, changes your relationship with the tool from adversarial to collaborative. And it changes something quieter and more important: your relationship with your own expertise. Because when the tool starts producing outputs that reflect your standards, the implicit message changes from “you’re not sophisticated enough for this” to “you brought the sophistication to this.” The tool was always capable. You were always capable. The missing piece was the bridge between the two.

A Final Word to Every HR Professional Still Sitting at That Desk

The problem was never you. It was always the prompt.

Whatever you have been thinking about yourself and AI — whatever quiet conclusion you’ve started drawing about your own technological fluency, your own strategic capability, your own readiness for the future of work — consider a direct counterargument.

You are operating in one of the most complex professional disciplines that exists. You are working with frameworks that took decades of academic research to develop. You are producing outputs that affect people’s livelihoods, their wellbeing, their sense of belonging in the organizations they spend most of their waking hours inside.

Here is what I know to be true after years working across corporate real estate, workplace strategy, and the people function: the individuals who end up in HR are there because they care. Whether they chose it from the start or arrived there through a different path — something shifted, and they started deeply caring about the people they were there to serve. All they want to do is do well, contribute more, help more people, and grow their ability to do all of those things.

Having access to AI tools that work — that produce framework-grounded, credible, professional-grade outputs — allows people in these roles to contribute more to the organization and help more employees. It allows the organization to make faster, better decisions that are aligned with strategy and that serve people well.

That is the whole point. Not the technology. Not the prompts. Not the frameworks. The people at the end of every decision HR makes.

Remember, people work in these departments because they care about people. And these tools, used properly, allow people to serve more people.

The HR professionals who are getting exceptional outputs from AI are not more capable than you. They have better prompts — prompts grounded in the frameworks you already know: Gallup, SHRM, McKinsey, Gartner, Leesman, Kotter — written for the professional context in which you work.

The 6pm shame is real. The frustration is real. The quiet question about whether you’re good enough is real. But none of those things are evidence of what you think they’re evidence of.

They are evidence of how much you care. Of how high your standards are. Of how deeply your expertise runs — deep enough to feel the gap between what AI produces and what your profession demands.

That gap is closable. It always was.

The problem was never you. It was always the prompt.

Related Questions

Why do my AI outputs for HR work feel generic and unprofessional?

Generic AI outputs in HR are almost always a prompting problem, not a tool problem. Large language models produce statistically average outputs when given vague inputs. HR is a domain built on specific frameworks — Gallup, SHRM, McKinsey, Gartner, Kotter, Leesman — and AI needs to be explicitly instructed to apply those frameworks to produce professional-grade work. Think of it like onboarding a new employee: you have to walk the tool through your context, your standards, and your audience before you can expect it to deliver at the level your expertise demands.

How can HR professionals use AI more effectively without being tech experts?

Effective AI use in HR does not require technical expertise. It requires professional expertise — which HR professionals already have. The key is learning to translate your domain knowledge into prompt structure: explicitly naming the frameworks you want applied, specifying the audience and context, defining the format of the output, and setting the evidence standard you require. Pre-built, framework-grounded prompts written specifically for HR functions are the most efficient starting point for professionals who want expert outputs without the trial-and-error learning curve.
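One way to picture that translation is as a small template with a slot for each element: frameworks, audience and context, output format, and evidence standard. The Python sketch below is only an illustration of the idea, not a prescribed method. The names `HRPromptSpec` and `build_prompt` are hypothetical, and the example values are assumptions chosen to mirror the return-to-office scenario discussed earlier.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class HRPromptSpec:
    """Hypothetical container for the prompt elements described above."""
    task: str
    frameworks: List[str]      # frameworks the AI must apply by name
    audience: str              # who will read the output
    context: str               # organizational situation
    output_format: str         # structure you expect back
    evidence_standard: str     # what counts as acceptable support


def build_prompt(spec: HRPromptSpec) -> str:
    """Assemble a plain-text prompt with one labeled section per element."""
    if not spec.frameworks:
        # A prompt with no named framework is the vague input the article warns about.
        raise ValueError("Name at least one framework to apply.")
    framework_lines = "\n".join(f"- {name}" for name in spec.frameworks)
    return (
        f"Task: {spec.task}\n\n"
        f"Apply these frameworks explicitly:\n{framework_lines}\n\n"
        f"Audience: {spec.audience}\n"
        f"Context: {spec.context}\n\n"
        f"Output format: {spec.output_format}\n"
        f"Evidence standard: {spec.evidence_standard}\n"
    )


if __name__ == "__main__":
    # Illustrative values only; replace them with your own organization's details.
    example = HRPromptSpec(
        task="Draft a change management plan for a return-to-office implementation",
        frameworks=["Kotter's 8-step change model"],
        audience="Executive leadership team",
        context="Mid-size organization moving from remote-first to hybrid work",
        output_format="Phased timeline with one section per Kotter step",
        evidence_standard="Cite the source for every statistic; flag anything unverified",
    )
    print(build_prompt(example))
```

The resulting text can be pasted into any general-purpose AI tool. The point of the structure is that none of the four elements can be left out by accident, which is where most generic outputs begin.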

Is AI making HR professionals’ jobs easier, or is that a myth?

The honest answer is that it depends entirely on how it’s being used. For HR professionals with access to framework-grounded, domain-specific prompts, AI is genuinely transformative, compressing hours of drafting and research into minutes while maintaining professional standards. For HR professionals using general-purpose prompts with no domain context, AI is adding time and frustration, not removing it. The technology has the potential to be the most powerful tool HR has ever had. Whether that potential is realized depends on whether the prompting infrastructure that unlocks it is accessible to the people who need it most.

Ready to Close the Gap?

We built The Workplace AI Prompt Library — 360+ prompts grounded in the frameworks HR professionals already know: Gallup Q12, SHRM, McKinsey 7-S, Kotter, Leesman, and more. Every prompt includes built-in citation requirements, compliance context, and role-specific structure — so the AI finally speaks your language.

No more staring at a blank text box. No more generic outputs. Just professional-grade AI results that you can actually put your name on.
